Resilient Distributed Dataset (RDD)

Before we discuss Resilient Distributed Dataset , lets see how do we launch Spark?

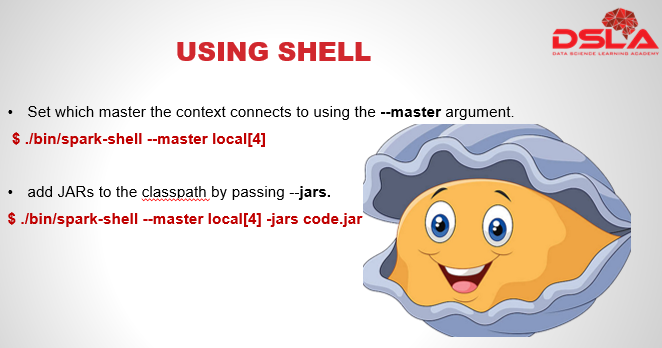

A Spark shell executable file is usually present in Spark version folder which in turn is present under the “opt” folder, but it may vary according to the stack being used. Commonly used Big data stacks are Cloudera, Hortonworks and MapR. The first command shown in the slide is a generalized command used to start spark.

Where “master” is the mode in which we wish to start the shell, here we are using local mode with 4 cores.

We can also add Jars to the classpath.The 2nd code in the slide represents launching of spark shell in local mode with 4 cores, and additional jars.

Spark shell automatically initiates a spark context at the time of its launch with variable “sc”. SparkContext is the main entry point of Spark program.

This also means one would not be able to create a new one.

But, one can add different variations to the launch by specifying which master to use? Which jars or repositories to add.. etc..

Here are few more examples for launching spark shell with more properties like with added packages and repositories.Also we have a “help” option to list out all the options for spark.

- add dependencies (e.g. Spark Packages) to your shell session using —packages.

$ ./bin/spark-shell –master local[4] –packages “org.example:example:0.1 “

- additional repositories where dependencies might exist can be passed to the —repositories.

- Use spark-shell –help for complete list of options.

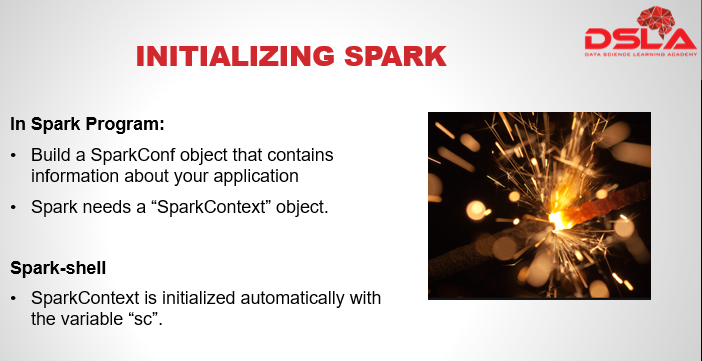

Initializing spark:

While writing a spark program, an important requirement is a SparkContext object, which tells Spark how and where to access a cluster. And to create a SparkContext one needs to build a SparkConf object that contains information about your application. As mentioned before, a spark-shell automatically initializes spark context while launching a shell.

We have an example here to show how sparkconf and spark context is initialized while writing a spark program.

val conf = new SparkConf().setAppName(appName).setMaster(master)

new SparkContext(conf)

Points to remember:

Point 1: The name set under appname parameter is a just to identify the process or program in the Cluster UI.

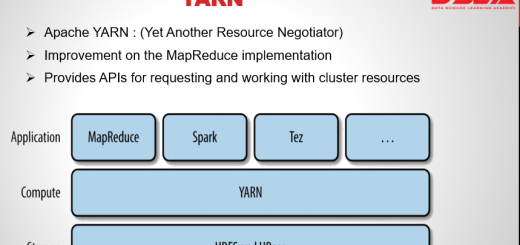

Point 2: Cluster Manager available in Spark are: Mesos or YARN cluster URL or a special “local” string to run in local mode

What is a Resilient Distributed Dataset ?

Spark revolves around the concept of a resilient distributed dataset (RDD).

RDD is the fundamental data abstraction of Apache Spark which are an immutable collection of objects which is capable of performing computation on different nodes of the cluster. Each and every dataset in Spark RDD is logically partitioned across many servers so that they can be computed in a distributed manner.

Lets understand RDD by decomposing its name one by one.

First we have R – Resilient, like the name suggests RDDs show resilience, referring to their ability of self recovery in case of failure by re-computing the lost or damaged data using lineage graph i.e. Directed Acyclic Graph (DAG).

Next we have D for Distributed, which refers to the logical partitioning of Data across many servers and nodes for computational purpose.

Last D is the for the dataset used.