History of Hadoop and its comparison to other systems

History Of Hadoop and its Comparison to Other Systems

Hadoop isn’t the first distributed system for data storage and analysis, but it has some unique properties that set it apart from other systems and some that may seem similar. Here we look at some of them.

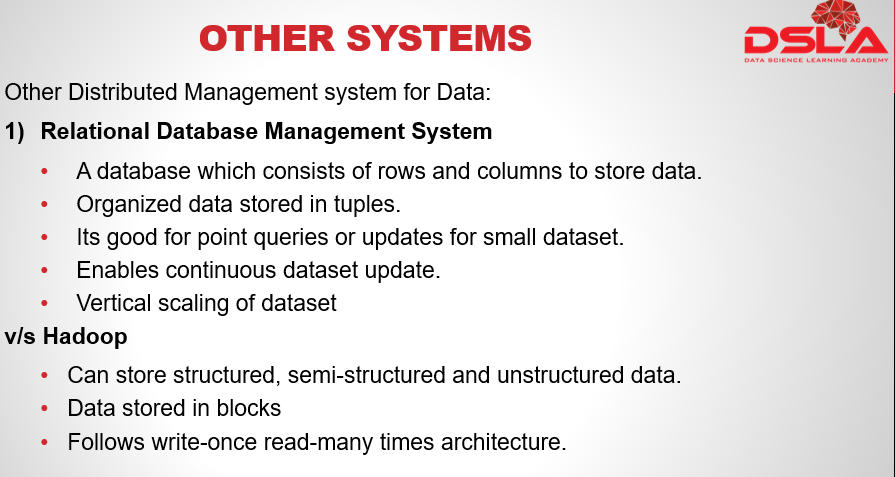

Most popularly used is: RDBMS – Relational Database Management System.

RDBMS is a database which is used to store data in the form of tables comprising of several rows and columns. Due to the collection of organized set of tables, data can be accessed easily in RDBMS. RDBMS works better when the volume of data is low. It is considered to be good for point queries or updates, where the dataset has been indexed to deliver low-latency retrieval and update times of a relatively small amount of data. handles structured data. relational database is good for datasets that are continually updated.In contrast, MapReduce is a good fit for problems that need to analyze the whole dataset in a batch fashion, particularly for ad hoc analysis. It shines exceptionally in semi-structured and unstructured data. can handle data up to petabytes of size. MapReduce suits applications where the data is written once and read many times.

HPC and grid computing communities have been doing large-scale data processing for years. Broadly, the approach is to distribute the work across a cluster of machines, which access a shared filesystem, hosted by a storage area network (SAN). This works well for predominantly compute-intensive jobs, but it becomes a problem when nodes need to access larger data volumes (hundreds of gigabytes, the point at which Hadoop really starts to shine). Hadoop tries to co-locate the data with the compute nodes, so data access is fast because it is local. This feature, known as data locality, it is at the heart of data processing in Hadoop and is the reason for hadoops good performance. Distributed processing frameworks like MapReduce spare the programmer from having to think about failure, since the implementation detects failed tasks and reschedules replacements on machines that are healthy.

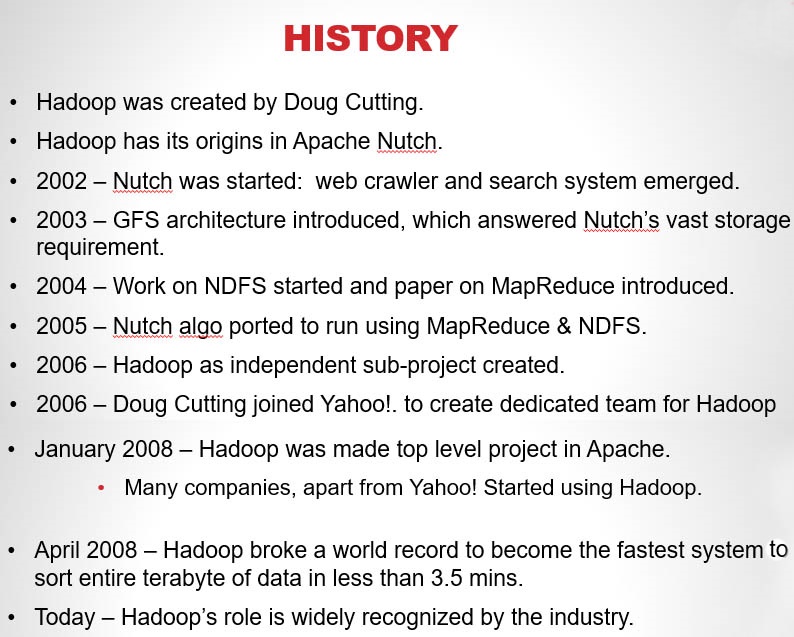

Lets now have a brief look at the history of Hadoop. Hadoop was created by Doug Cutting. Hadoop has its origins in Apache Nutch, an open source web search engine.

Nutch was started in 2002, then a working crawler and search system quickly emerged. However, its creators realized that this architecture wouldn’t scale to the billions of pages on the Web, because of its vast storage needs.

In 2003 a paper on GFS – Google’s distributed filesystem, architecture was released. which would solve the storage needs of very large files generated as a part of the web crawl and indexing process.

In 2004, work on Nutch Distributed Filesystem – existing started.

also In 2004, Google published the paper that introduced MapReduce to the world.

Then the Nutch developers introduced a working MapReduce implementation in Nutch, and later that year all the major Nutch algorithms had been ported to run using MapReduce and NDFS.

in February of 2006, a new independent subproject called Hadoop was created. around the same time doug cutting joined yahoo which provided a dedicated team and resources to turn hadoop into a system that ran at web scale.

In January 2008, Hadoop was made its own top-level project at Apache. and By this time, Hadoop was being used by many other companies besides Yahoo!, such as facebook and new york times

In April 2008, Hadoop broke a world record to become the fastest system to sort an entire terabyte of data in less than 3.5 mins.

Today, Hadoop is widely used in mainstream enterprises. Hadoop’s role as a general purpose storage and analysis platform for big data has been recognized by the industry.