YARN Comparison to MapReduce

Major differences between MapReduce 1 and MapReduce 2 (i.e., YARN).

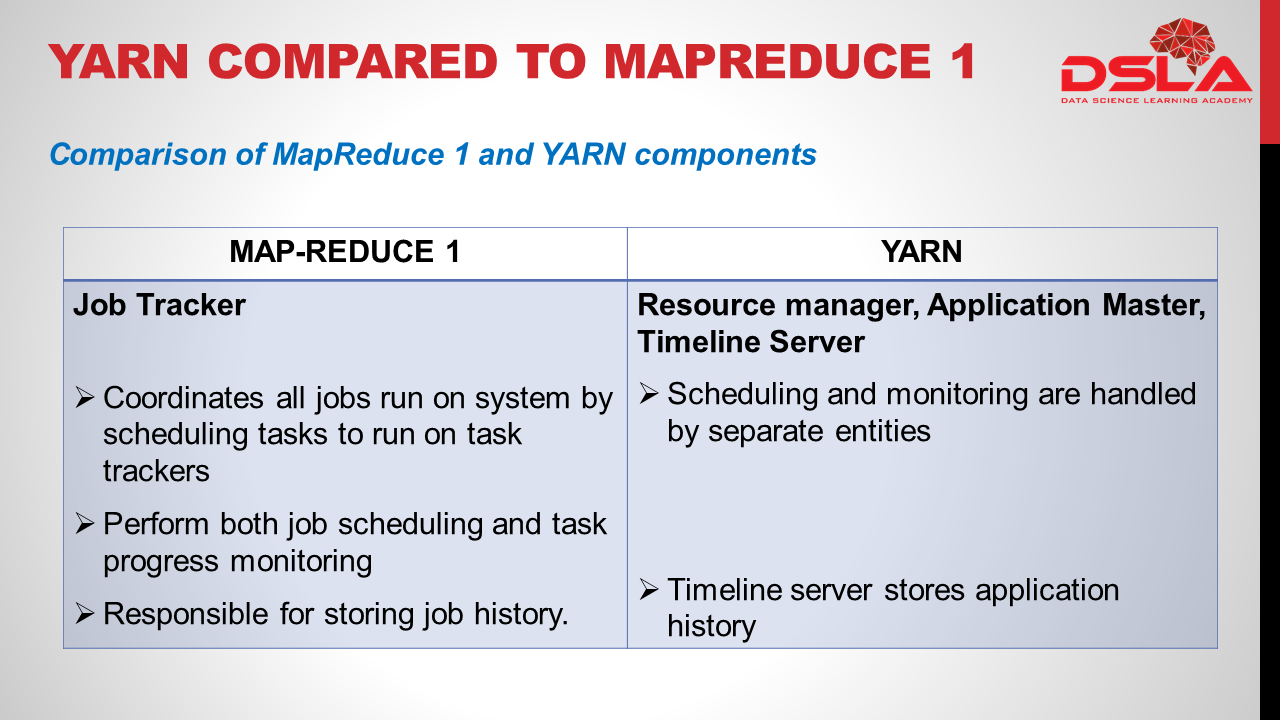

In MapReduce 1, there are two types of daemon that control the job execution process: a jobtracker and one or more tasktrackers.

The jobtracker coordinates all the jobs run on the system by scheduling tasks to run on tasktrackers. It takes care of both job scheduling and task progress monitoring, i.e., keeping track of tasks, restarting failed or slow tasks, and doing task bookkeeping such as maintaining counter totals. It is also responsible for storing job history for completed jobs.

By contrast, in YARN these responsibilities are handled by three separate entities: the resource manager, an application master (one for each MapReduce job), and the timeline server.

Scheduling and monitoring are handled by the resource manager and the application master. The jobtracker's job-history role is taken over in YARN by the timeline server, which stores application history.

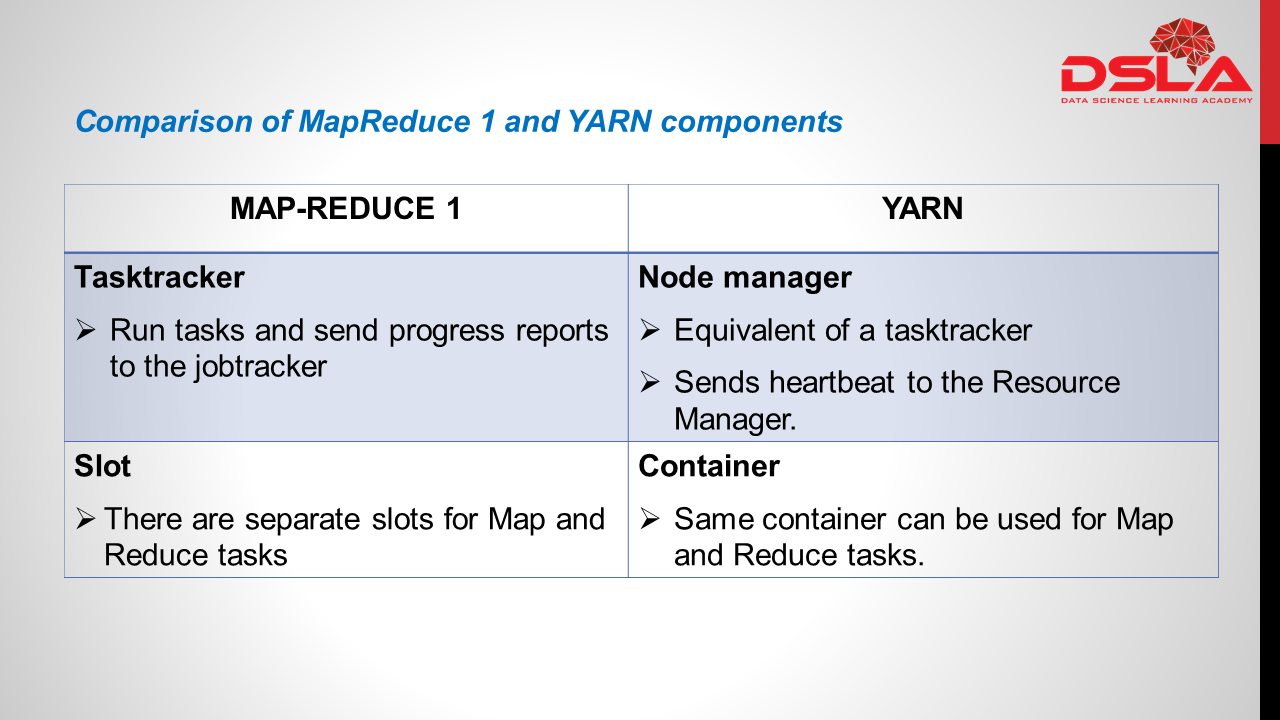

Tasktracker:

Tasktrackers run tasks and send progress reports to the jobtracker, which keeps a record of the overall progress of each job. If a task fails, the jobtracker can reschedule it on a different tasktracker. The YARN equivalent of a tasktracker is a node manager, which periodically sends a heartbeat to the resource manager.

MapReduce 1 works on the concept of slots: a slot can run either a map task or a reduce task, but not both. YARN, by contrast, works on the concept of containers. Containers can run generic tasks, so the same container can be used for map and reduce tasks, leading to better utilization.
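The difference between slots and containers can be illustrated with a small sketch. This is a toy model, not Hadoop code: the class names `SlotNode` and `PoolNode`, the 1024 MB task size, and the task list are all assumptions made for illustration. It shows a node with fixed map and reduce slots turning away reduce tasks while map slots sit idle, whereas a node with a single resource pool accepts any task that fits.

```python
from dataclasses import dataclass


@dataclass
class SlotNode:
    """MapReduce 1 style (hypothetical model): fixed map and reduce slots."""
    map_slots: int
    reduce_slots: int

    def try_start(self, kind: str) -> bool:
        # A task may only use a slot of its own type.
        if kind == "map" and self.map_slots > 0:
            self.map_slots -= 1
            return True
        if kind == "reduce" and self.reduce_slots > 0:
            self.reduce_slots -= 1
            return True
        return False


@dataclass
class PoolNode:
    """YARN style (hypothetical model): one pool of memory for all task types."""
    free_mb: int

    def try_start(self, kind: str, mb: int = 1024) -> bool:
        # Any task type may take a container if enough memory is free.
        # The 1024 MB default is an assumed size, not a Hadoop setting.
        if self.free_mb >= mb:
            self.free_mb -= mb
            return True
        return False


def run(node, tasks):
    """Return the tasks the node was able to start, in order."""
    return [task for task in tasks if node.try_start(task)]


tasks = ["reduce", "reduce", "reduce", "map"]
slot_started = run(SlotNode(map_slots=3, reduce_slots=1), tasks)
pool_started = run(PoolNode(free_mb=4096), tasks)

print(slot_started)  # ['reduce', 'map']
print(pool_started)  # ['reduce', 'reduce', 'reduce', 'map']
```

In the slot model two reduce tasks wait even though two map slots remain unused. In the pool model all four tasks start because the node has room for four 1024 MB containers.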

Now let's look at the benefits of using YARN over MapReduce 1:

1) Scalability: YARN can run on larger clusters than MapReduce 1. MapReduce 1 hits scalability bottlenecks in the region of 4,000 nodes and 40,000 tasks, stemming from the fact that the jobtracker has to manage both jobs and tasks. YARN overcomes these limitations by virtue of its split resource manager/application master architecture: it is designed to scale up to 10,000 nodes and 100,000 tasks.

2) High availability (HA): HA is usually achieved by replicating the state needed for another daemon to take over the work of providing the service if the service daemon fails. With the jobtracker’s responsibilities split between the resource manager and application master, YARN can provide HA for the resource manager first, and then for YARN applications (on a per-application basis).

3) Utilization: In MapReduce 1, each tasktracker is configured with a static allocation of fixed-size “slots,” which are divided into map slots and reduce slots at configuration time. A map slot can only be used to run a map task, and a reduce slot can only be used for a reduce task.

In YARN, a node manager manages a pool of resources, rather than a fixed number of designated slots. MapReduce running on YARN will not hit the situation where a reduce task has to wait because only map slots are available on the cluster, which can happen in MapReduce 1. If the resources to run the task are available, then the application will be eligible for them.

4) Multitenancy: MapReduce is just one YARN application among many. It is even possible for users to run different versions of MapReduce on the same YARN cluster, which makes the process of upgrading MapReduce more manageable.
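The state-replication idea behind the high availability point (item 2) can be sketched in a few lines. This is only a conceptual toy and does not reflect how YARN implements resource manager HA: `SharedStateStore`, `ToyDaemon`, the daemon names `rm1` and `rm2`, and the application ID are all hypothetical. The active daemon writes its state to storage that a standby can also reach, so the standby can take over with the same state after a failure.

```python
class SharedStateStore:
    """Hypothetical stand-in for storage that both daemons can reach."""

    def __init__(self):
        self.applications = {}  # application id -> last known status


class ToyDaemon:
    """A toy service daemon that is either active or on standby."""

    def __init__(self, name, store):
        self.name = name
        self.store = store
        self.active = False

    def record(self, app_id, status):
        if not self.active:
            raise RuntimeError(f"{self.name} is on standby")
        # Keep state outside the daemon so it survives this daemon failing.
        self.store.applications[app_id] = status

    def take_over(self):
        # The standby becomes active and reloads the replicated state.
        self.active = True
        return dict(self.store.applications)


store = SharedStateStore()
primary = ToyDaemon("rm1", store)
standby = ToyDaemon("rm2", store)

primary.active = True
primary.record("app_1", "RUNNING")

primary.active = False           # simulate the active daemon failing
recovered = standby.take_over()  # standby continues from replicated state
print(recovered)  # {'app_1': 'RUNNING'}
```

Because the state lives in the shared store rather than in the failed daemon, the standby sees the running application as soon as it becomes active.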